Cultivating Giants to Stand On: Extending Kubernetes to the Edge

Written by Roman Shaposhnik, Project EVE lead and Co-Founder & VP of Products and Strategy at ZEDEDA

This content originally ran on the ZEDEDA Medium Blog.

Kubernetes is more than just a buzzword. With Gartner predicting that by the end of 2025, 90% of applications at the edge will be containerized, it’s clear that organizations will be looking to leverage Kubernetes across their enterprises, but this isn’t a straightforward proposition. There’s much more involved than just repurposing the architecture we use in the data center in a smaller or more rugged form factor at the edge.

The edge environment has several major distinctions from data centers that must be addressed in order to successfully leverage Kubernetes:

- Diversity: Inherent diversity of technology and the related domain expertise required

- Scale: Unprecedented scale and geographic distribution of deployed edge nodes

- No perimeter: The lack of a physical or network perimeter requires a zero-trust security model

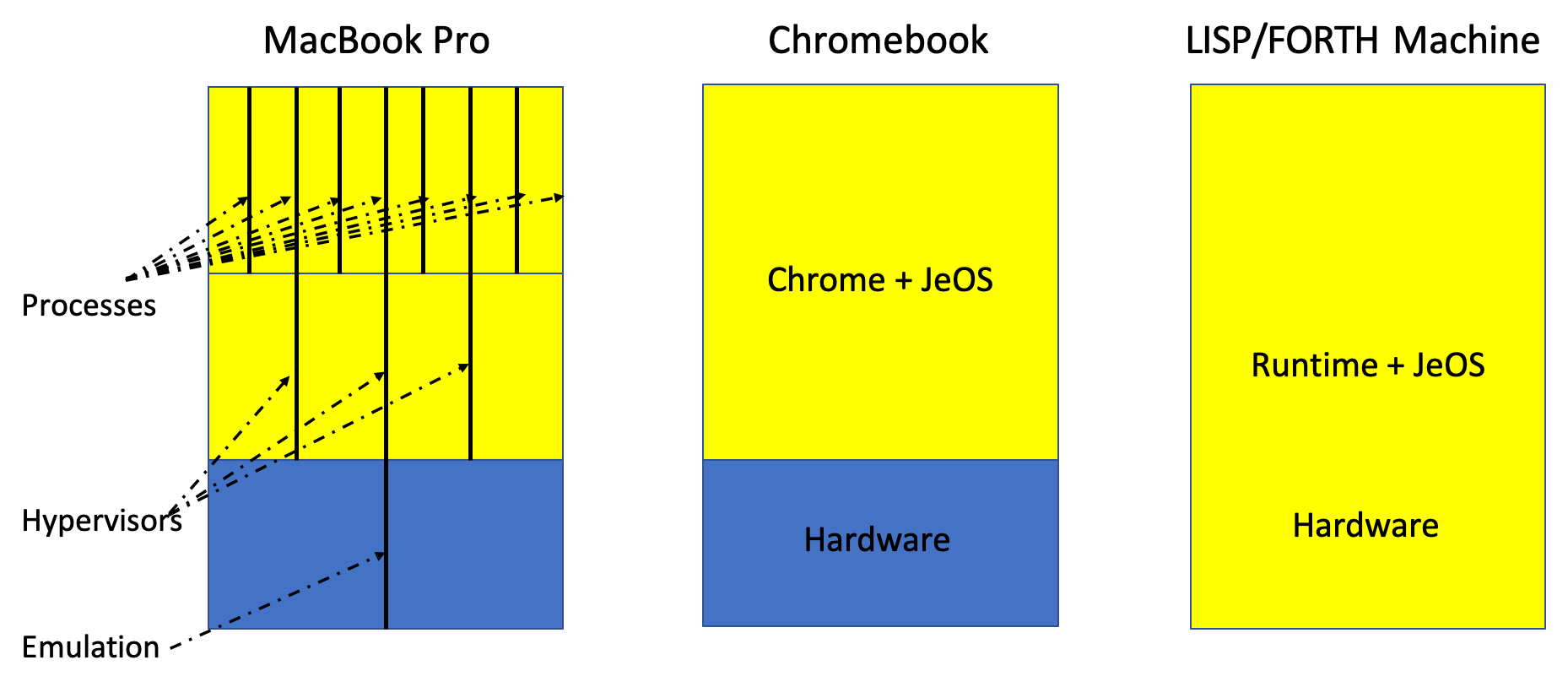

Computers, whether massive data center machines or small nodes on the smart device edge, are essentially three parts: hardware, operating system (OS) and runtime, all running in support of some sort of application. And within that, an operating system is just a program that allows the execution of other programs. We went through a time in the 1990s when it was believed that the OS was the only part that mattered, and the goal was to find the best one, as if the OS were a titan with the entire world on its shoulders. The reality, though, is that it’s actually turtles all the way down! By this I mean that with virtualization, computers are not limited to just three parts. We can slice each individual layer in many different ways, with hardware emulation, hypervisors, etc.

So what is the best way to look at these building blocks in 2020?

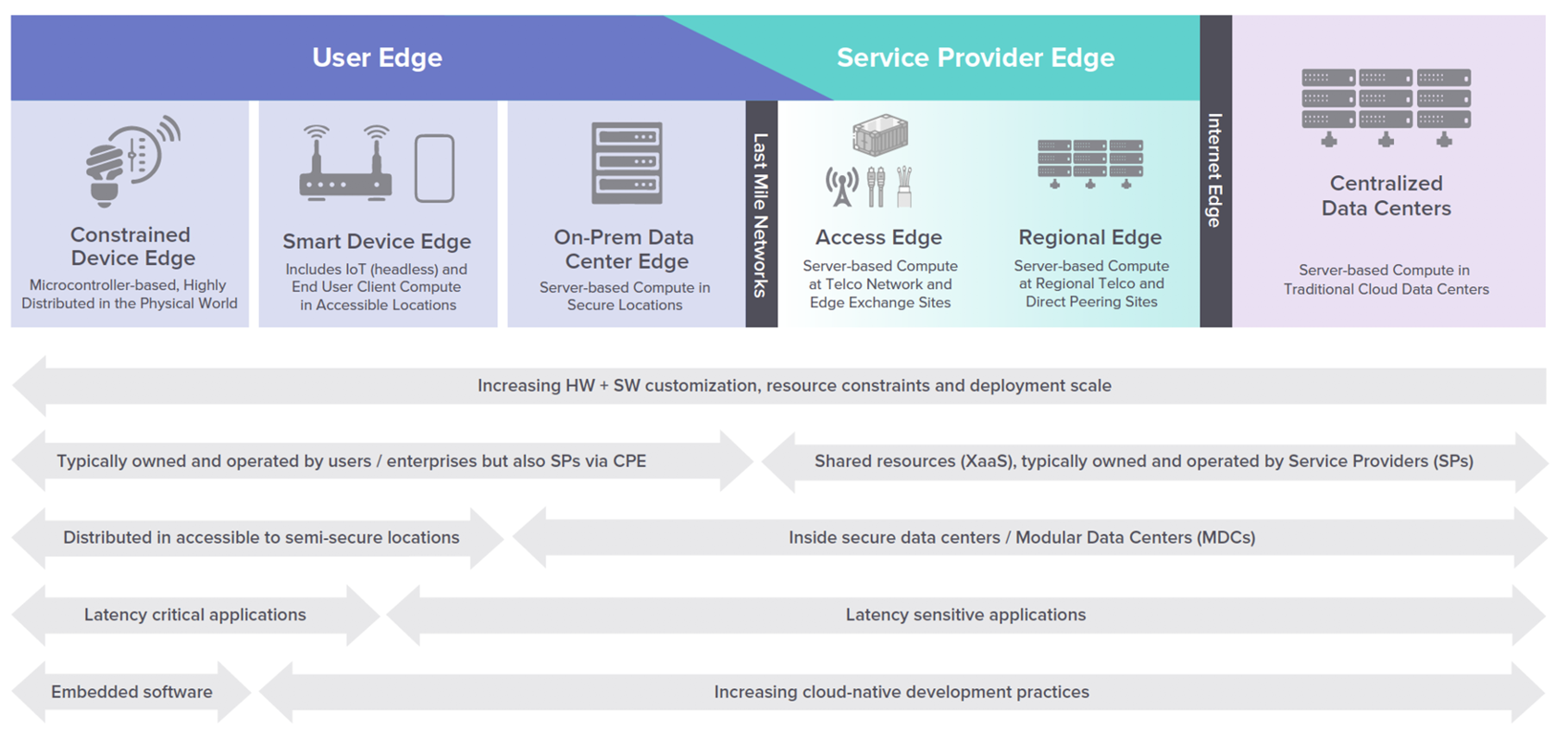

We first have to talk about where we find computers. The spectrum of computers being deployed today is vast, from giant machines in data centers doing big things all the way to specialized computers that might be a smart light bulb or sensor. In the middle is the proverbial edge, which we call the Smart Device Edge.

As we look at how to best run Kubernetes on the smart device edge, the answer is that it’s a triplet of K3s, some kind of operating system (or support for K3s) and some sort of hardware.

And so then, if we have K3s and we have hardware, what’s the best possible way to run K3s on hardware? The answer is a specialized operating system.

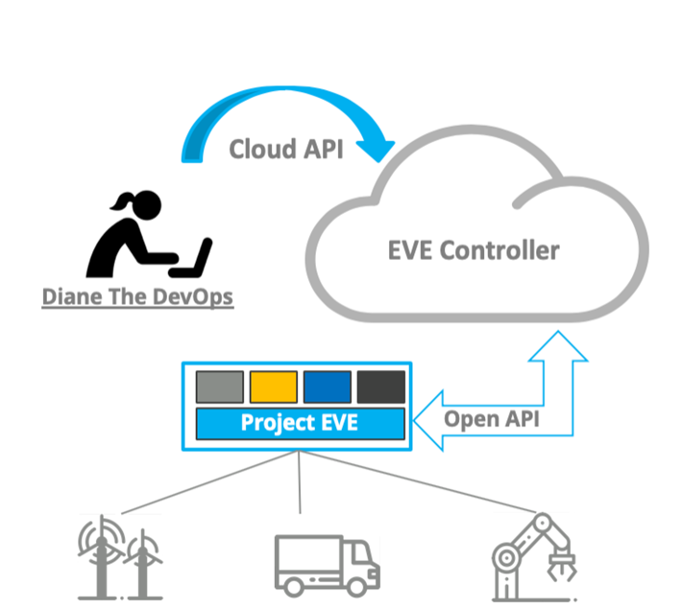

The team behind Docker took the same approach when they had to run Docker on a MacBook Pro: they created Docker Desktop, a specialized engine, much like an operating system, that’s only there in support of Docker. Similarly, for the smart device edge, we’ve created EVE, a lightweight, secure, open, and universal operating system built to address the unique security and scale requirements of edge nodes deployed outside of the data center.

What makes EVE different? It’s the only OS that enables organizations to extend their cloud-like experience to edge deployments outside of the data center while also supporting legacy software investments. It provides an abstraction layer that decouples software from the diverse landscape of IoT edge hardware, making application development and deployment easier, more secure and more interoperable. The hosting of Project EVE under LF Edge ensures vendor-neutral governance and community-driven development.

Ready to learn more and to see EVE in action? Check out the full discussion.